ScraperBot

Google Maps Business Data Extractor

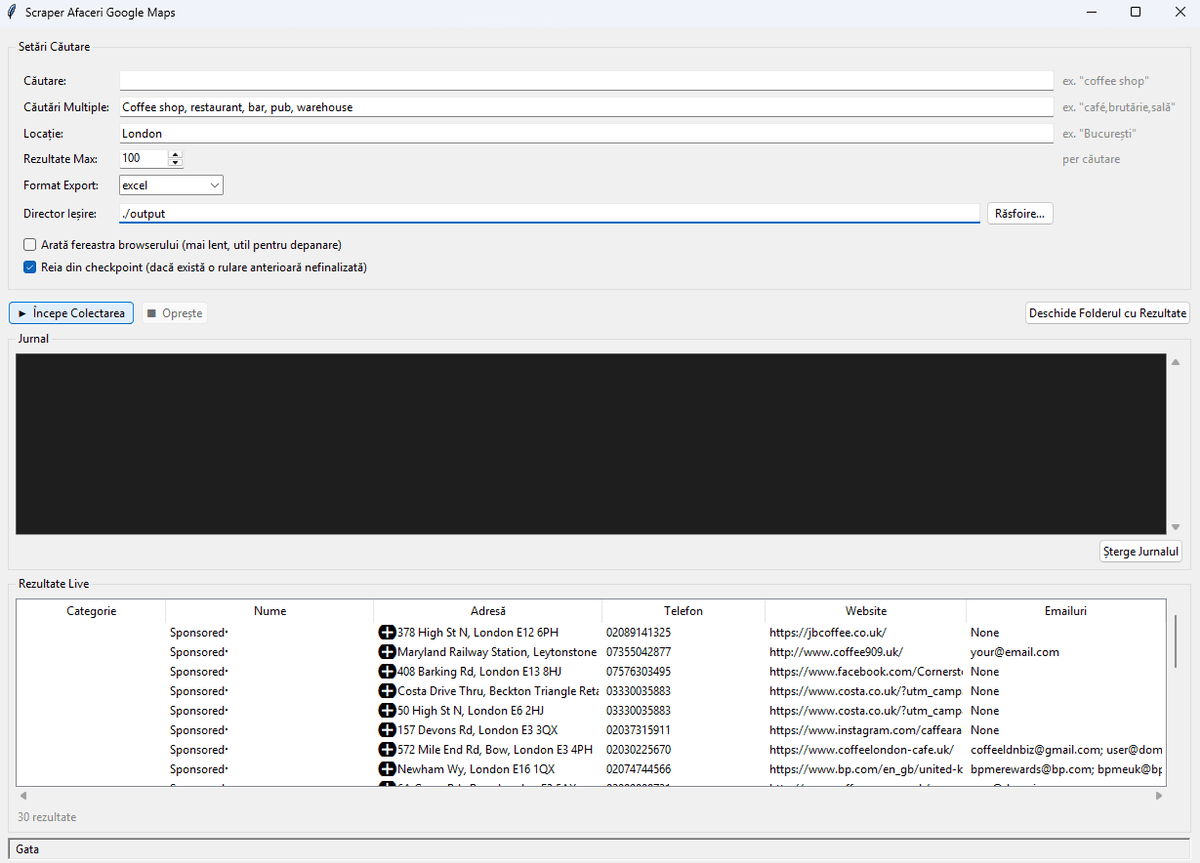

Automated Google Maps scraper with parallel processing, email extraction, CLI + GUI interfaces, and Excel/JSON export.

Overview

ScraperBot is a production-ready Python tool that automatically extracts detailed business information from Google Maps search results. It's designed for business researchers, lead generation specialists, and sales teams who need to compile business contact databases at scale.

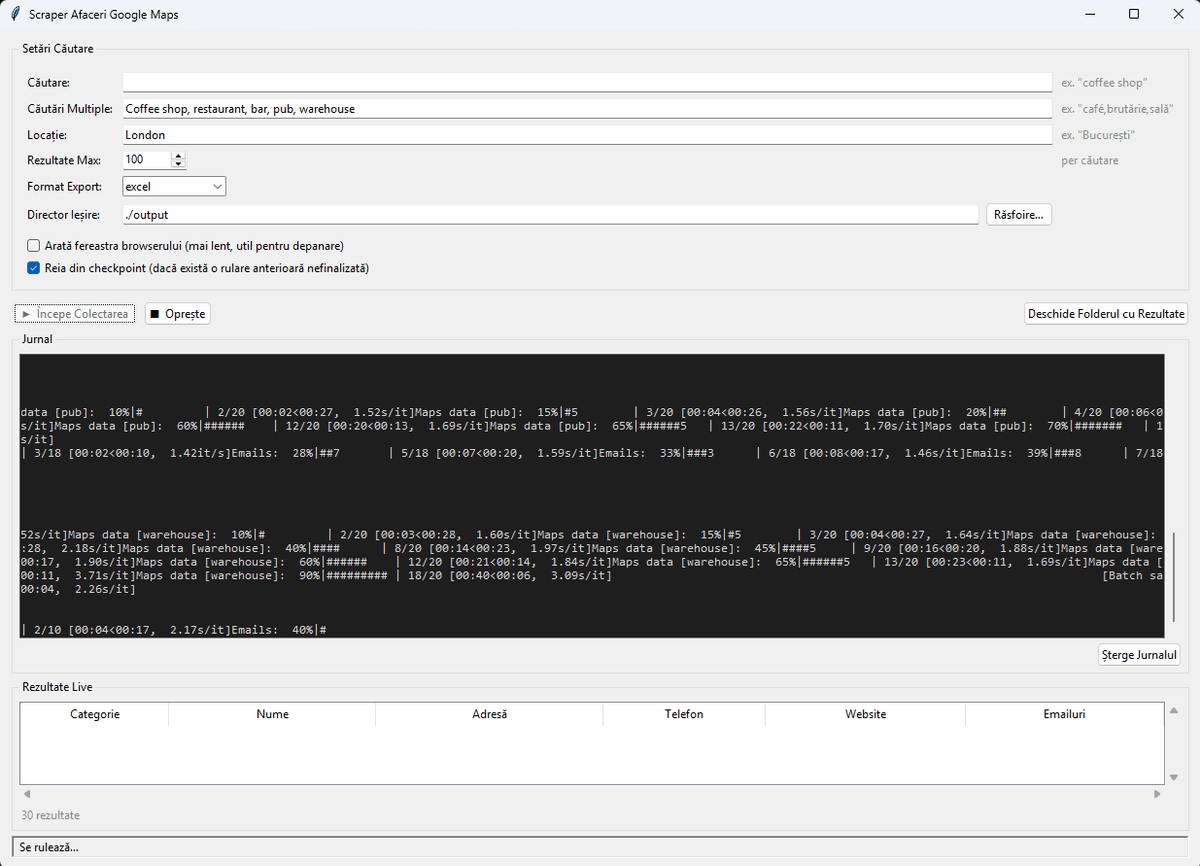

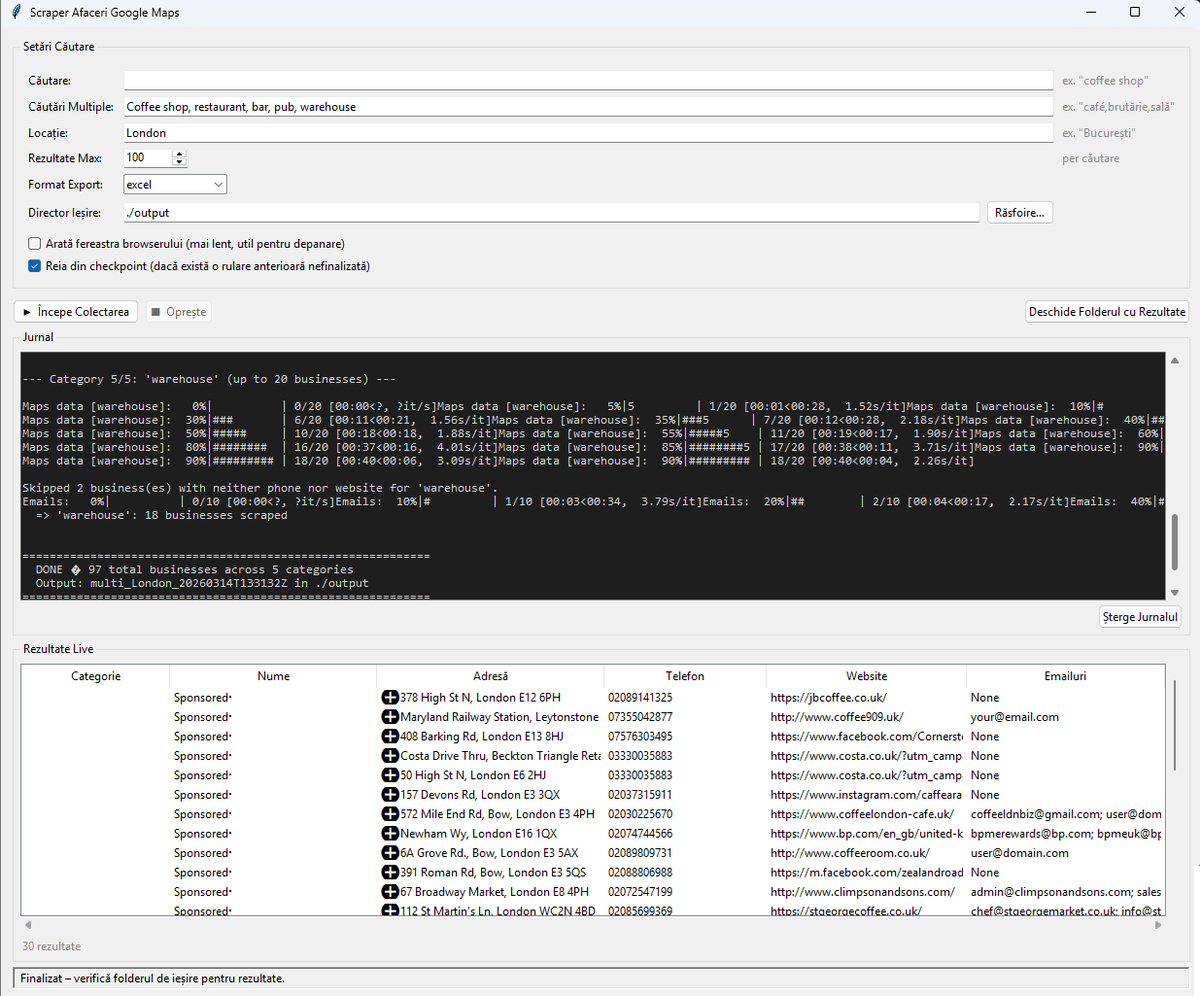

The scraper supports single and multi-category searches (up to 10 categories per run), parallel processing with up to 3 worker threads, and a checkpoint/resume system that saves progress every 10 businesses. If a run is interrupted, scraping resumes from the last saved checkpoint.

A standout feature is the deep email extraction pipeline, which harvests emails from business websites using four methods: mailto links, Cloudflare email-obfuscation decoding, JSON-LD structured data parsing, and regex scanning. Every candidate address is validated to filter out junk. The tool provides both a full CLI with interactive prompts and a tkinter GUI with a live results table and real-time log viewer.
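To make the pipeline concrete, here is a minimal sketch of three of those methods (mailto links, Cloudflare decoding, regex scanning) followed by validation. It is an illustration, not ScraperBot's actual code: the function names, the junk filters, and the regexes are assumptions. The Cloudflare step relies on the publicly known `data-cfemail` scheme, where the first byte of the hex string is an XOR key for the remaining bytes.

```python
import re
from urllib.parse import unquote

# Deliberately simple patterns; a production validator would be stricter.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
MAILTO_RE = re.compile(r'href=["\']mailto:([^"\'?]+)', re.IGNORECASE)
CFEMAIL_RE = re.compile(r'data-cfemail=["\']([0-9a-fA-F]+)["\']')

# Hypothetical junk filters: asset names such as "logo@2x.png" look like emails.
JUNK_SUFFIXES = (".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp")
JUNK_DOMAINS = {"example.com"}


def decode_cfemail(encoded: str) -> str:
    """Decode a Cloudflare data-cfemail hex string (first byte is the XOR key)."""
    try:
        data = bytes.fromhex(encoded)
    except ValueError:
        return ""  # malformed hex, e.g. odd length
    if not data:
        return ""
    key = data[0]
    return bytes(b ^ key for b in data[1:]).decode("utf-8", errors="replace")


def is_plausible(email: str) -> bool:
    """Reject obvious non-addresses such as image file names."""
    if email.endswith(JUNK_SUFFIXES):
        return False
    return email.rsplit("@", 1)[-1] not in JUNK_DOMAINS


def extract_emails(html: str) -> list[str]:
    """Collect candidates from all three sources, then validate and de-duplicate."""
    candidates = [unquote(m) for m in MAILTO_RE.findall(html)]
    candidates += [decode_cfemail(m) for m in CFEMAIL_RE.findall(html)]
    candidates += EMAIL_RE.findall(html)

    emails: list[str] = []
    for candidate in candidates:
        email = candidate.strip().lower()
        if EMAIL_RE.fullmatch(email) and is_plausible(email) and email not in emails:
            emails.append(email)
    return emails
```

Calling `extract_emails(page_html)` on a fetched page returns a lowercased, de-duplicated list in discovery order. Running the plain regex last means explicitly marked-up addresses are recorded before loose text matches.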

Python Developer

13+ tools

2026

The Problem

Sales teams and business researchers needed to compile business contact databases from Google Maps but were spending hours manually copying information. Existing scraping tools were unreliable, got blocked quickly, and couldn't extract email addresses from business websites.

Key Features

Multi-Category Scraping

Search up to 10 business categories in any location with combined output.

Parallel Processing

Up to 3 worker threads for faster data collection across categories.
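One plausible way to fan categories out across a capped worker pool is `concurrent.futures.ThreadPoolExecutor`. The sketch below is an assumption about the shape of such code, not the tool's real implementation: `scrape_category` is a placeholder that returns fake rows, and `scrape_all` is a hypothetical name.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

MAX_CATEGORIES = 10  # limit stated for a single run
MAX_WORKERS = 3      # limit stated for worker threads


def scrape_category(category: str, location: str) -> list[dict]:
    """Placeholder for the real per-category scrape; returns fake rows."""
    return [
        {"name": f"{category} #{i}", "category": category, "location": location}
        for i in range(1, 3)
    ]


def scrape_all(categories, location, scrape_fn=scrape_category, workers=MAX_WORKERS):
    """Run one scrape per category on at most MAX_WORKERS threads."""
    if not 1 <= len(categories) <= MAX_CATEGORIES:
        raise ValueError(f"expected 1 to {MAX_CATEGORIES} categories")
    workers = max(1, min(workers, MAX_WORKERS, len(categories)))

    results: dict[str, list[dict]] = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {pool.submit(scrape_fn, c, location): c for c in categories}
        for future in as_completed(futures):
            category = futures[future]
            try:
                results[category] = future.result()
            except Exception as exc:
                # One failed category should not discard the others.
                print(f"{category}: failed ({exc})")
                results[category] = []

    # Return in the order the caller asked for, not completion order.
    return {c: results[c] for c in categories}
```

Threads suit this workload because each worker spends most of its time waiting on the browser and network rather than on CPU.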

Deep Email Extraction

Harvests emails via mailto links, Cloudflare decoding, JSON-LD parsing, and regex scanning, with validation to drop junk addresses.
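The JSON-LD step is the least obvious of the four, so here is a rough standard-library sketch of how it could work. It assumes the site publishes schema.org data in `<script type="application/ld+json">` blocks with `email` properties; the class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks: list[str] = []
        self._inside = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append("".join(self._buffer))
            self._inside = False


def walk_emails(node):
    """Yield every string stored under an "email" key, at any nesting depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "email" and isinstance(value, str):
                if value.lower().startswith("mailto:"):
                    value = value[len("mailto:"):]
                yield value.strip()
            else:
                yield from walk_emails(value)
    elif isinstance(node, list):
        for item in node:
            yield from walk_emails(item)


def emails_from_jsonld(html: str) -> list[str]:
    """Parse all JSON-LD blocks in a page and return the unique emails found."""
    collector = JsonLdCollector()
    collector.feed(html)
    found: list[str] = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed blocks instead of failing the page
        for email in walk_emails(data):
            if email and email not in found:
                found.append(email)
    return found
```

Walking the structure recursively matters because contact details often sit inside nested objects such as `contactPoint` rather than at the top level.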

Checkpoint & Resume

Auto-saves progress every 10 businesses. Resume interrupted scrapes seamlessly.
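A checkpoint of this kind can be as simple as a JSON file rewritten every 10 businesses. The sketch below assumes each business has a stable identifier and that a caller-supplied `fetch` function does the scraping; the file layout and all names are hypothetical.

```python
import json
import os
from pathlib import Path

CHECKPOINT_EVERY = 10  # save interval stated for the tool


def load_checkpoint(path: Path) -> dict:
    """Return the saved state, or an empty state if no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"done": [], "results": []}


def save_checkpoint(path: Path, state: dict) -> None:
    """Write to a temp file, then swap it in, so a crash never leaves half a file."""
    tmp = path.with_suffix(path.suffix + ".tmp")
    tmp.write_text(json.dumps(state), encoding="utf-8")
    os.replace(tmp, path)


def scrape_with_resume(business_ids, fetch, path: Path) -> list[dict]:
    """Fetch each business once, skipping any already recorded in the checkpoint."""
    state = load_checkpoint(path)
    done = set(state["done"])
    since_save = 0

    for business_id in business_ids:
        if business_id in done:
            continue  # finished in an earlier run
        state["results"].append(fetch(business_id))
        state["done"].append(business_id)
        done.add(business_id)
        since_save += 1
        if since_save >= CHECKPOINT_EVERY:
            save_checkpoint(path, state)
            since_save = 0

    save_checkpoint(path, state)  # final save for the last partial batch
    return state["results"]
```

With this layout, an interrupted run repeats at most the 9 businesses fetched since the last save, and re-running the same call with the same checkpoint path continues from there.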

Dual Interface

Full CLI with interactive prompts plus a tkinter GUI with live results table.

Export Formats

JSON and Excel output with per-category sheets for multi-category scrapes.

My Approach

I built a Python tool with anti-detection measures, parallel processing for speed, and a deep email extraction pipeline that harvests addresses from multiple sources (mailto links, Cloudflare decoding, JSON-LD, and regex scanning). A checkpoint system limits data loss if the scrape is interrupted.

Challenges Overcome

- Bypassing Google Maps anti-bot detection using playwright-stealth and human-like scrolling patterns

- Building a multi-method email extraction pipeline that handles Cloudflare email obfuscation

- Implementing a checkpoint/resume system that saves progress every 10 businesses

- Creating both a CLI and tkinter GUI that share the same scraping engine without code duplication
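The last challenge, one engine behind two interfaces, usually comes down to having the engine report through callbacks instead of printing or touching widgets directly. The sketch below shows that pattern under stated assumptions: `ScrapeEngine`, its callback names, and the placeholder rows are all hypothetical, and the real scraping work is stubbed out.

```python
from typing import Callable, Iterable


class ScrapeEngine:
    """Core logic with no knowledge of how results or logs are displayed."""

    def __init__(
        self,
        on_result: Callable[[dict], None] = lambda row: None,
        on_log: Callable[[str], None] = lambda message: None,
    ):
        self.on_result = on_result
        self.on_log = on_log

    def run(self, categories: Iterable[str], location: str) -> int:
        """Emit one placeholder row per category and return the row count."""
        count = 0
        for category in categories:
            self.on_log(f"Searching {category} in {location}")
            row = {"name": f"Sample {category}", "category": category}  # stub data
            self.on_result(row)
            count += 1
        self.on_log(f"Done: {count} result(s)")
        return count


def run_cli(categories: list[str], location: str) -> int:
    """CLI front end: both callbacks simply print."""
    engine = ScrapeEngine(
        on_result=lambda row: print(f"  + {row['name']}"),
        on_log=print,
    )
    return engine.run(categories, location)


# A GUI front end would construct the same engine with callbacks that add a
# row to its results table and append a line to its log viewer.
```

Because the engine only ever calls `on_result` and `on_log`, it can also be exercised in tests by passing `list.append` for both.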

Tech Stack

Language

Scraping

Data Processing

GUI

Techniques

Results & Metrics

Businesses in <30 Min

Data Accuracy

Categories per Run

“ScraperBot processes thousands of leads in minutes. Best automation tool we've ever had.”

Michael Thompson

CEO, LeadGen Agency

Lessons Learned

1. Anti-detection is an arms race: building human-like behaviour patterns matters more than rotating proxies.

2. Checkpoint systems should be the first thing you build in any data pipeline, not an afterthought.

3. Dual interfaces (CLI + GUI) work best when the core logic is completely decoupled from the presentation layer.

Interested in a similar solution?

Let's discuss how I can build something like this for your business.